EightOS Motion Capture Sensors

In 2020 we started working on our own EightOS hardware: motion capture sensors for choreography and music-making. The idea was to create a wearable device that would provide live visual, haptic, and sonic feedback on movement. This feedback could then be used to augment one’s physical experience, help develop new patterns of movement, and also be used to create music using the body as an instrument.

The first prototype was created at the end of 2020 by Koo Des and Thomas Julien, who developed the hardware concept, a 3D model, and the basic firmware. This iteration of the sensor had a built-in Wi-Fi module and could trace movement via its built-in gyroscope.

The gyroscope data would then be sent via OSC to a computer, which would generate sound from it. The sensor also had haptic feedback, which would “inform” the movers about the state they were in (received via OSC), so that they were aware of their dynamics and could adjust them based on that feedback. We used those sensors to choreograph the EightOS opening ceremony at the Gwangju Biennale in Korea. It was particularly interesting to use the sensor as a choreographic device, as it removes the figure of the choreographer. The dancers simply adjust their movement dynamics based on the feedback from the device, producing a certain physicality, which in turn creates interesting emergent properties for the collective movement.
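To make the OSC step more concrete, here is a minimal sketch of how three gyroscope readings could be packed into a single OSC 1.0 message. It is not taken from the actual firmware: the function name `encodeGyroMessage` and the address `/gyro` used in the usage note are assumptions made for illustration. Only the byte layout follows the OSC 1.0 rules (null-terminated strings padded to a multiple of four bytes, then big-endian 32-bit floats).

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <string>
#include <vector>

// Append a string plus 1 to 4 null bytes, so that the total length is a
// multiple of 4 (OSC 1.0 string rule: null-terminated, 4-byte aligned).
static void appendPaddedString(std::vector<std::uint8_t>& out, const std::string& s) {
    out.insert(out.end(), s.begin(), s.end());
    std::size_t pad = 4 - (s.size() % 4);  // always at least one terminating null
    out.insert(out.end(), pad, 0);
}

// Append a 32-bit float in big-endian byte order, as OSC requires.
static void appendFloat32(std::vector<std::uint8_t>& out, float value) {
    static_assert(sizeof(float) == 4, "this sketch assumes 32-bit IEEE 754 floats");
    std::uint32_t bits = 0;
    std::memcpy(&bits, &value, sizeof bits);
    out.push_back(static_cast<std::uint8_t>((bits >> 24) & 0xFF));
    out.push_back(static_cast<std::uint8_t>((bits >> 16) & 0xFF));
    out.push_back(static_cast<std::uint8_t>((bits >> 8) & 0xFF));
    out.push_back(static_cast<std::uint8_t>(bits & 0xFF));
}

// Hypothetical helper: build one OSC message carrying x, y, z gyroscope values.
std::vector<std::uint8_t> encodeGyroMessage(const std::string& address,
                                            float x, float y, float z) {
    assert(!address.empty() && address[0] == '/');  // OSC addresses start with '/'
    std::vector<std::uint8_t> packet;
    appendPaddedString(packet, address);  // address pattern
    appendPaddedString(packet, ",fff");   // type tags: three float32 arguments
    appendFloat32(packet, x);
    appendFloat32(packet, y);
    appendFloat32(packet, z);
    return packet;
}
```

Calling `encodeGyroMessage("/gyro", 1.0f, 0.0f, -1.0f)` yields a 28-byte buffer: 8 bytes of padded address, 8 bytes of padded type tags, and 12 bytes of float data. On the device, such a buffer would typically be sent as the payload of a single UDP datagram, and the computer on the other end would decode it the same way in reverse.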

In 2021 we took this concept further and developed a built-in dynamics analysis algorithm as well as an improved display interface. The display could show the current dynamic state of the mover and could also be used to control the device and switch it between various modes of operation.

Thanks to the support we received from the IMPACT New Stages program by Théâtre de Liège, we could work on improving the software of the sensors. We also asked Johan Gourdin and Elise Hautefaye to help us with the hardware / software development.

In this article, we share the process of creating the sensors and technical details.

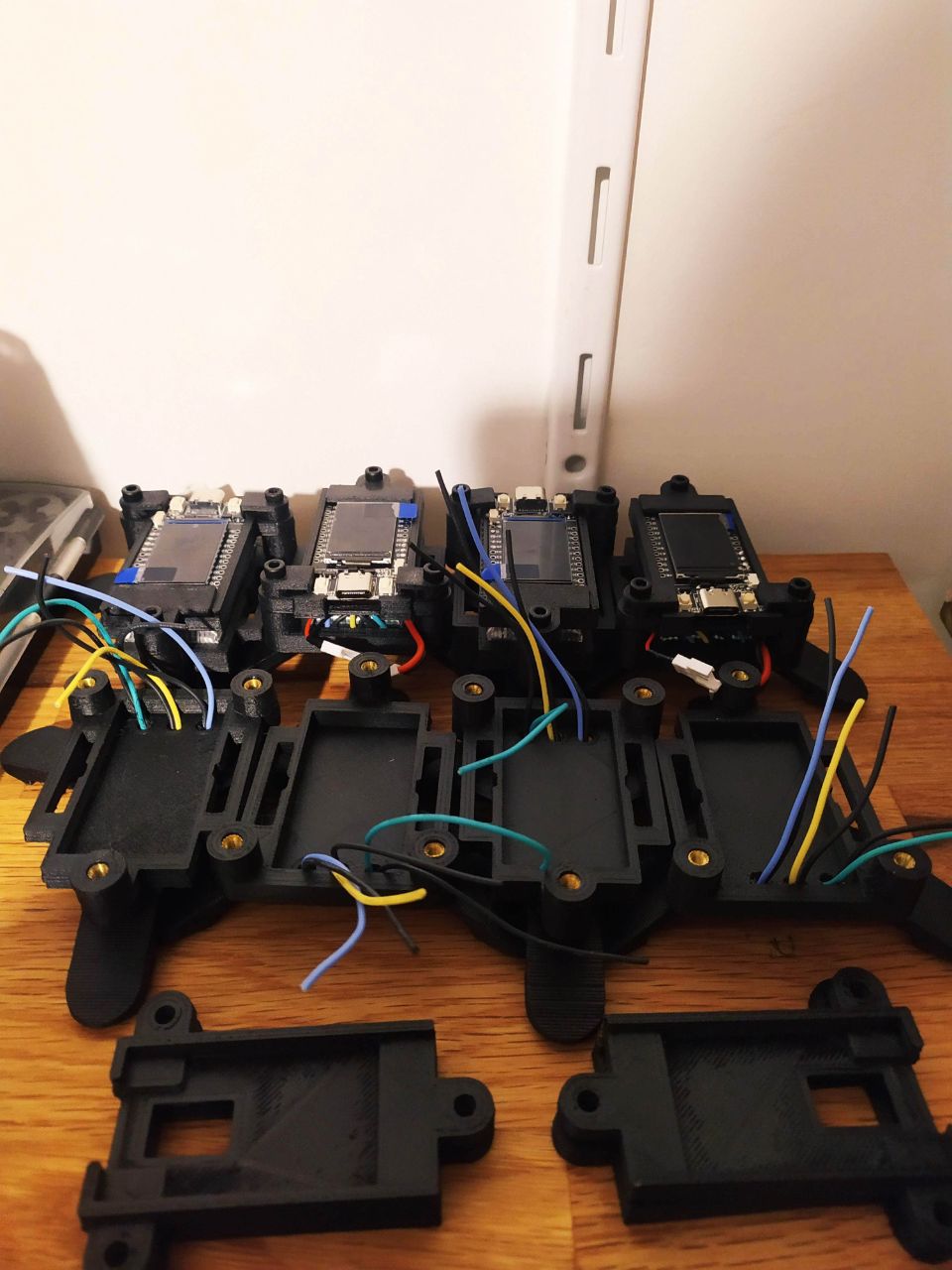

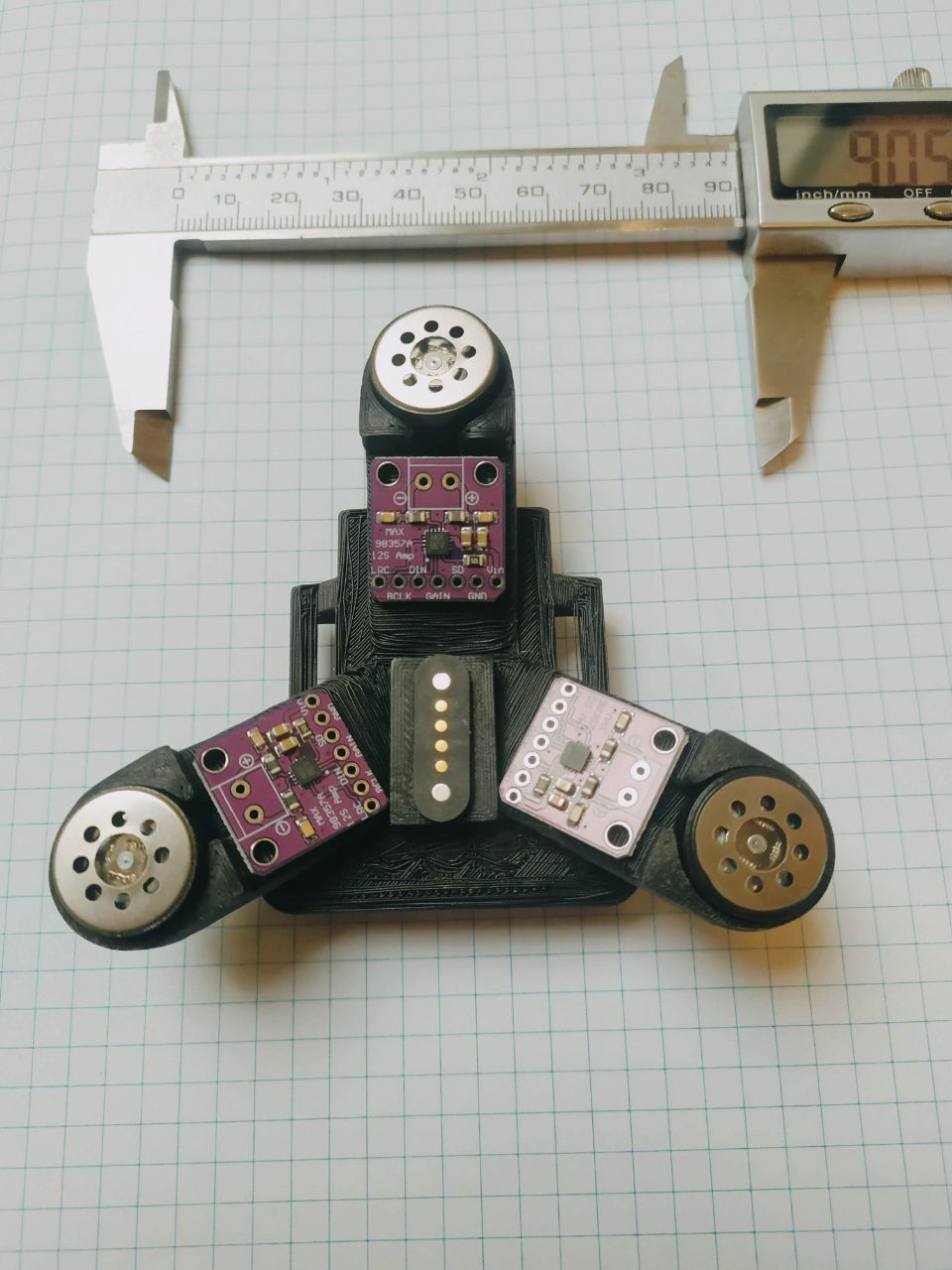

The body of each sensor is made of plastic and produced using 3D printing.

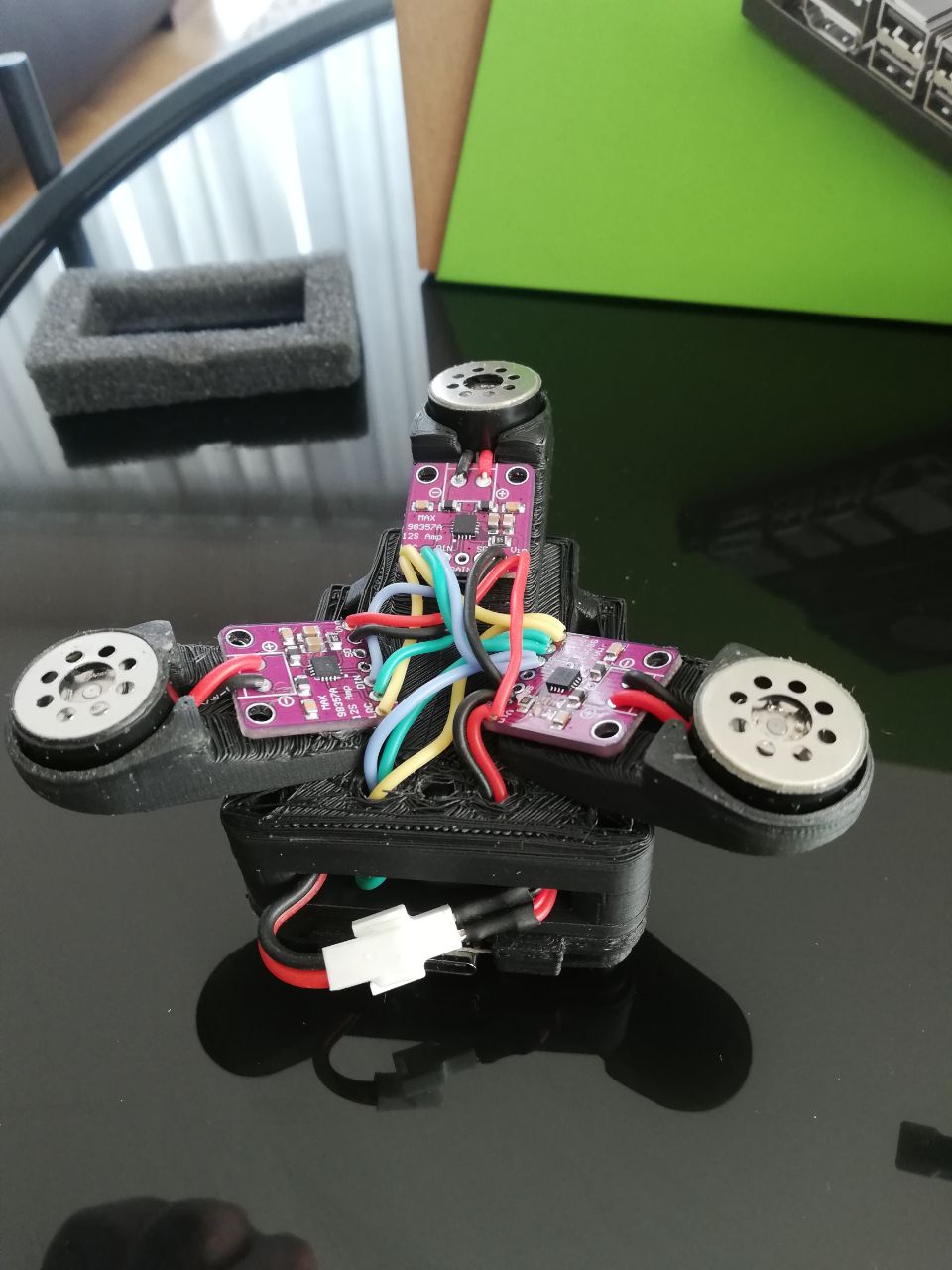

It has three tripod-like legs for stability, each of which also carries conductive wire so that vibration can be produced on every “leg” of the sensor:

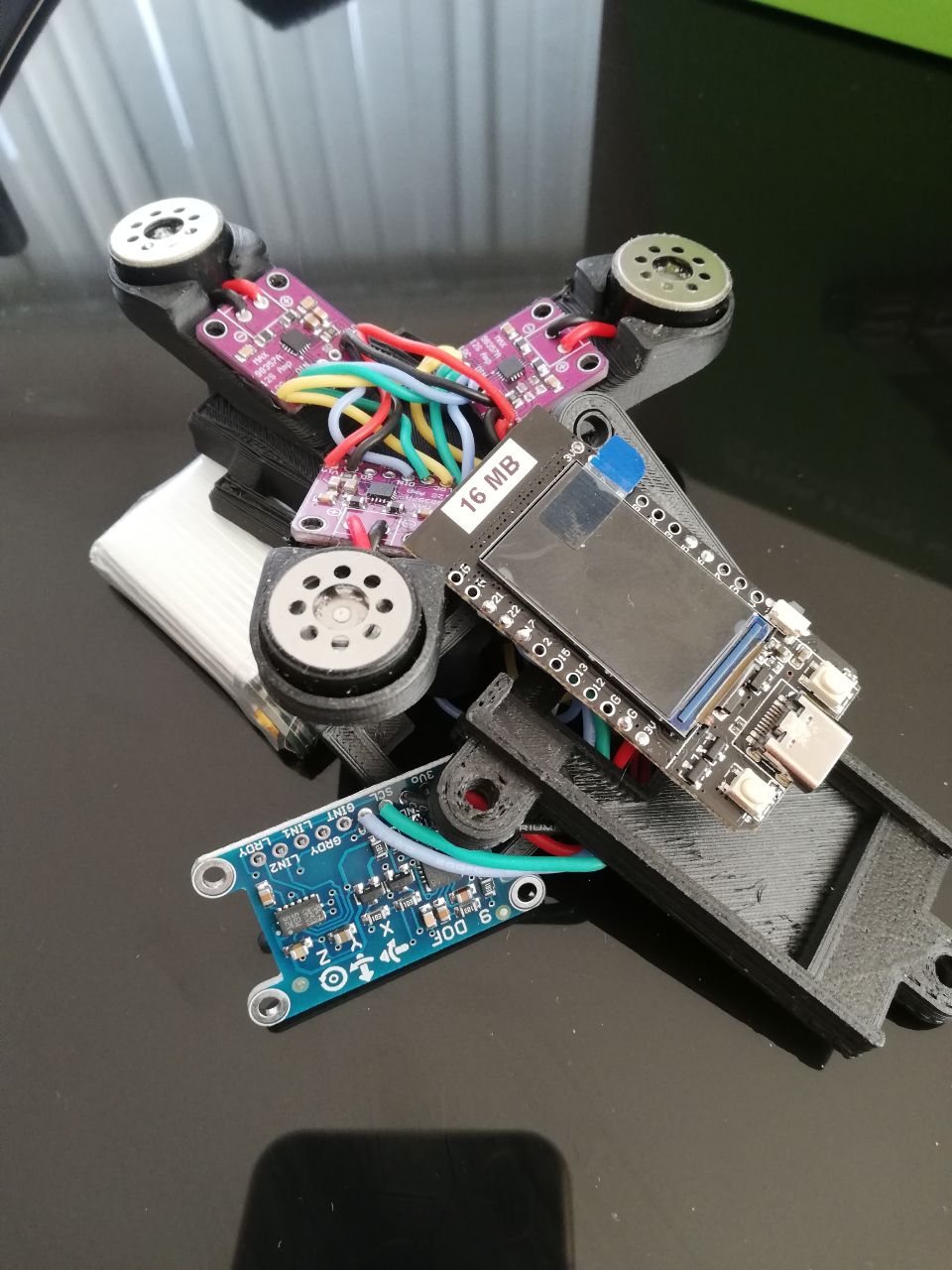

The electronic board inside the sensor holds the key components, such as a gyroscope and a Wi-Fi transmitter / receiver, so that the data can be read, measured, and sent to the other sensors or to a desktop computer via a network. Every sensor can also act as a router, so it can set up its own Wi-Fi network for all the other devices to share.

The code is written in C++ and the firmware can be upgraded using a USB cable. As the hardware is Arduino-based, it is pretty simple to control. The code is open source and is available on GitHub: https://github.com/juthomas/Tripodes-firmware

The sensors can be attached to the body using a strap, so they can be worn on any body part: head, arm, leg. The sensor is designed to be resilient to external impact. Its display, however, is fragile, so we are working on making it stronger and protecting it with a silicone casing.

You can learn more about the sensors and how they work in real life in our article on choreography and music-making using the motion tracking sensors.

This project was supported by the IMPACT New Stages program by Théâtre de Liège with the financial support of Rayonnement Wallonie, an initiative of the Walloon Government, operated by ST’ART SA: